A new phase for PauseAI

We're hiring for four paid positions.

We’re delighted to announce that PauseAI has received funding from the Future of Life Institute.

This significant boost to our funds will allow us to be bigger, better, and louder than ever before.

Our goal remains the same as it was when Joep founded PauseAI in May 2023: to work towards a global pause on the development of frontier AI models. Over the last two years, we’ve grown to over 600 members, established chapters in 13 countries, and stood up to reckless AI development on multiple fronts.

In the US, PauseAI volunteers and others contacted their Senators to get the 10-year moratorium on state AI regulation removed from Trump’s Big Beautiful Bill. The provision was defeated by a 99-1 vote. Following Google DeepMind's violation of the Frontier AI Safety Commitments, PauseAI UK organised the largest AI safety protest ever outside DeepMind’s London office. We've since secured support from 60 politicians from over 10 parties for PauseAI's open letter.

We’ll now be taking our efforts to the next level. We’re hiring for four paid positions: a Policy Director, leads of our UK and French chapters, and a Community Manager. You can find more details on these roles here.

Through 2025 and 2026, we’ll be focusing on continuing to grow our national chapters, running more PauseCon events across several countries, and reaching new audiences through targeted campaigns, social media content, and advertising.

AI lobbyists recently announced they’ll be spending over $100 million to fight AI regulation. They know they can’t beat us fairly. They know they’ll have to spend millions to have a chance of preventing regulation. As Rob Wiblin recently tweeted:

“The advantage the AI industry has is extraordinary amounts of money to blow on lobbying. The advantage its opponents have is that public opinion on AI is very negative and just unmobilised as yet. Unclear which hand you'd rather have to play.”

The polling is clear – the public do not want AI companies to be allowed to continue their race to superintelligence. Public opinion is on the side of common-sense regulation. Let’s make it count.

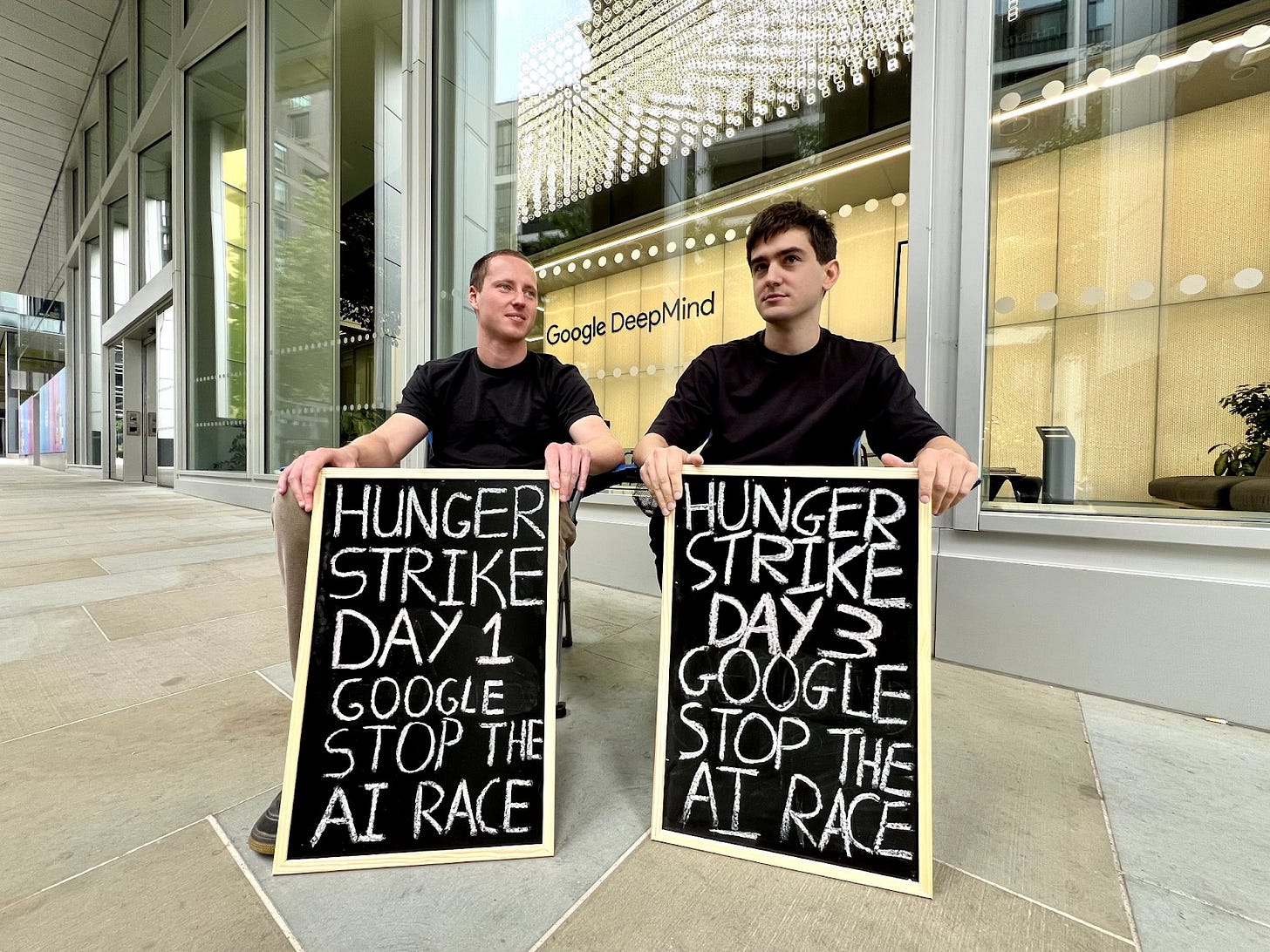

Hunger strikes at Google DeepMind and Anthropic

On Thursday the 4th, Guido Reichstadter announced he was on the third day of a hunger strike outside the offices of AI company Anthropic in San Francisco. He demanded that Anthropic stop their “reckless actions”, and that its management and employees do “everything in their power” to halt the race to artificial general intelligence.

On Friday the 5th, Michaël Trazzi independently announced he was beginning a hunger strike outside Google DeepMind’s office in London, also demanding an end to the race to AGI.

On Sunday the 7th, Denys Sheremet flew from Amsterdam to join Michaël on his hunger strike.

PauseAI is not behind these hunger strikes – the three are acting on their own behalf. It's difficult to know which tactics are the most effective, but we have a huge amount of respect for anyone who is willing to do uncomfortable things to alert the public to the threat to their lives.

The hunger strikes have already generated a significant amount of media attention, and we hope they can bring Demis Hassabis and Dario Amodei out to explain why they’re continuing to race towards AGI, despite both of them being on record saying that it may kill every man, woman, and child on the planet. Perhaps more importantly, we hope ordinary people can see how serious this situation is and can turn their fear into action. We also hope politicians will recognise that they can’t afford to ignore this issue any longer and will step up to protect their constituents.

Open Letter to Google DeepMind

Our open letter demanding Google DeepMind address their violation of the Frontier AI Safety Commitments was signed by 60 UK politicians, with another MP adding their name this week, and was published in Time. We were delighted to see so many lawmakers across the political spectrum take a strong stance in favour of AI safety, and refuse to stand aside whilst AI companies break important promises.

You can see more details about the letter here.

Brussels PauseCon

Earlier this year, we held the inaugural PauseCon in London. More than 50 people attended over the weekend to hear talks from the likes of Connor Leahy and panel discussions with Rob Miles, David Krueger, and other speakers, take part in workshops on organising and activism, and get to know others who are joining the movement against unregulated AI development.

We’re pleased to announce that the second PauseCon will be held in Brussels from the 11th to the 13th of December. As with PauseCon London, we’re able to offer optional accommodation to those who will be travelling into Brussels, so make sure to sign up here soon to secure your spot.

Contact our Organizing Director, Ella, if you’d like to present or have any particular thoughts about what you’d like to see.

PauseAI events in support of If Anyone Builds It, Everyone Dies

If Anyone Builds It, Everyone Dies has the potential to ignite public awareness of the extinction threat posed by AI, and to cement averting it as a top priority for politicians and voters the world over.

We’re hosting a series of events across several countries for people to unite in their desire to act.

Eliezer Yudkowsky and Nate Soares’ book clearly lays out the dangers of the unregulated race to superintelligent AI. To solve this problem, we must stare it in the face. We must inform others of this threat, and work with them to push forward regulation that will protect us.

It’s possible that the coming weeks will be the biggest moment for AI x-risk awareness since the release of the Future of Life Institute’s Pause letter. It’s up to us to make the most of that.

PauseAI UK is collaborating with ControlAI to host an unofficial launch party in London on the 22nd of September. You can find this and other events on our Luma calendar, with more to be announced in the coming weeks.

Small Update to Our Statement

After some feedback, we’re making a minor adjustment to our public statement. Our position has not changed; this is purely about clarity. The statement will now read as follows:

“We call on the governments of the world to sign an international treaty implementing a pause on the training of the most powerful general AI systems, until we know how to build them safely and keep them under democratic control.”

We have removed the word “temporary”, as some reasonably felt it implied that the “until we know how to build them safely” condition could be satisfied fairly quickly. Regardless of any individual’s thoughts on the difficulty of the alignment problem, PauseAI’s position has always been that, whilst it remains unsolved, we simply should not continue to race to develop increasingly powerful and uncontrollable AI.

Over 700 of you have already signed the statement. As we’ve made a minor adjustment, you’re welcome to email info@pauseai.info if you wish to remove your name.

Other news

The Chinese ambassador to the US calls for international cooperation on AI governance to avoid “opening Pandora’s box”.

AI lobbyists announced they’ll be spending over $100 million to fight AI regulation.

OpenAI released their latest model, GPT-5. Despite some talk of an “AI plateau”, GPT-5 was right on trend for METR’s time horizon benchmark, succeeding 50% of the time on tasks that take a human 2 hours and 17 minutes to complete. That time horizon has been doubling once every 7 months (although the doubling time may have shortened to just 4 months).

Californian AI safety bill SB 53 is likely to go to Governor Newsom for approval soon, and has received an endorsement from Anthropic.

Liz Kendall replaces Peter Kyle as the UK’s tech minister.

What we’ve been watching/reading

Roman Yampolskiy appeared on Steven Bartlett’s podcast to discuss the extinction threat posed by superintelligent AI, and suggested that people join organisations like PauseAI if they wish to take action

YouTuber Professor Dave Explains - “Will Artificial Intelligence Destroy Humanity?”, a video made in collaboration with ControlAI

Interviews with those on hunger strikes:

One from me (Tom) on Day 3 of Michaël’s hunger strike

Siliconversations on FLI’s AI Safety Index

Billy Perrigo in Time - The Race for Artificial General Intelligence Poses New Risks to an Unstable World

A video from YouTube channel Species | Documenting AGI on how AI companies manufacture fear about ‘losing the race’ to China to downplay the need for regulation
